Why AI-Generated Writing Is Boring

I'm encountering more and more writing where I can tell within a few sentences: "this person just handed it off to an LLM."

Excessively polished, with phrasing I've read somewhere before. Once I notice it a few lines in, my eyes glaze over and I can barely finish it.

An LLM is "a model that predicts the next word from the given context." If the context you feed it is ordinary, the output will be ordinary too. The corollary: if you give it your own unique experiences and perspectives as context, the output will reflect them.
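To make the next-word framing concrete, here is a deliberately tiny toy. It is a bigram model that only counts which word follows which in a made-up four-sentence corpus, then greedily picks the most frequent follower. Real LLMs are vastly more capable and condition on the whole context, so treat this purely as an illustration of why greedy prediction drifts toward the most common phrasing. The corpus and the function names (`train_bigrams`, `continue_text`) are invented for the example.

```python
from collections import Counter, defaultdict

# Invented toy corpus: three "ordinary" sentences and one slightly different one.
CORPUS = [
    "ai is changing the world",
    "ai is changing the way we work",
    "ai is changing the world of writing",
    "my editor is changing the title again",
]


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every word, which words follow it and how often."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def continue_text(follows: dict[str, Counter], context: str, n_words: int = 3) -> str:
    """Greedily append the single most frequent next word, up to n_words times."""
    words = context.lower().split()
    if not words:
        return ""
    for _ in range(n_words):
        candidates = follows.get(words[-1])  # this toy only looks at the last word
        if not candidates:
            break
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(CORPUS)
    print(continue_text(model, "ai", 4))
    print(continue_text(model, "my editor", 4))
```

Both prompts end up at "...is changing the world", the majority phrasing in the corpus. The toy forgets everything but the last word, which exaggerates the effect, but the pull toward the most likely continuation is the point being illustrated.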

In other words, boring output is not the model's fault. It's the user's.

Why "I Wrote This by Hand" Is Uncool

As the sense that "LLM-style writing is boring" has spread, more and more articles open with disclaimers like "I wrote this article myself, without AI." (Which is, honestly, a very human move.)

This is frankly embarrassing.

By saying it, you're essentially admitting that your own writing is indistinguishable from AI output.

The real goal is writing that anyone who reads it can tell is unmistakably yours. Then you simply publish it, no preamble needed.

Use AI to Write — Just Use It Right

The problem isn't the tool. It's how you use it. If handing it all over is the problem, then use it correctly.

I'm firmly in the camp of "use AI aggressively when writing." But that doesn't mean letting an LLM write freely. It means staying the one who writes, while the LLM supplements your work with diverse perspectives:

- Refining the outline

- Filling in angles and strengthening the argument

- Catching typos and errors

- Previewing how a specific persona would read it

Used this way, AI can strengthen your writing. I now write almost everything, professional and personal, through Claude Code (this piece included).

A Writing Enhancement Pipeline

I've built this process as a "writing enhancement pipeline" in Claude Code. Here's how my setup is structured — though the underlying approach can be applied with other AI tools as well.

- Subagent cluster: `article-outliner` (outline creation), `article-writer` (drafting in my writing style), `article-critic` (critical review of logic and argument), `article-reviewer` (quality check), and `fact-checker` (fact verification via web search), each operating as an independent specialist role

- Editorial team (`/blog-team`): An editor, a critic, a reader representative, and a fact-checker. These four agents launch in parallel, review from different angles simultaneously, and consolidate their suggestions

- Automatic validation: Every time I edit an article file, a hook automatically validates the frontmatter and Markdown formatting, a safety net that keeps broken content from being published

- Full workflow as commands: The entire sequence (scaffold generation → outline → draft → editorial team → quality check → publish) is set up as a streamlined pipeline
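The automatic-validation step is the most mechanical part of such a setup. Below is a minimal Python sketch of what a check like that could look like, assuming the article is a Markdown file with `---`-delimited frontmatter. The required keys (`title`, `date`), the function name `validate_article`, and the unclosed-code-fence rule are all hypothetical choices for illustration, not the actual hook. How a hook hands over the edited file's path is also an assumption: here the script just takes it as a command-line argument and exits non-zero when it finds problems.

```python
import sys

REQUIRED_KEYS = ("title", "date")  # hypothetical schema; adjust to your blog
FENCE = "`" * 3                    # Markdown code-fence marker


def validate_article(text: str) -> list[str]:
    """Return a list of problems; an empty list means the file looks publishable."""
    lines = text.splitlines()
    # Frontmatter must open on the very first line with '---'.
    if not lines or lines[0].strip() != "---":
        return ["missing frontmatter opening '---'"]
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        return ["frontmatter is never closed with '---'"]

    # Naive 'key: value' parsing; a real hook would use a proper YAML parser.
    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()

    errors = [f"frontmatter key '{k}' is missing or empty"
              for k in REQUIRED_KEYS if not meta.get(k)]

    # An odd number of fence lines in the body means a code block was left open.
    fences = sum(1 for line in lines[end + 1:] if line.lstrip().startswith(FENCE))
    if fences % 2:
        errors.append("unclosed code fence in body")
    return errors


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], encoding="utf-8") as f:
        problems = validate_article(f.read())
    for problem in problems:
        print(problem, file=sys.stderr)
    sys.exit(1 if problems else 0)
```

Returning a list of messages instead of stopping at the first failure means a single run reports everything that needs fixing.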

The key insight: AI shows its real power not in the "writing" stage but in the "reading, critiquing, and verifying" stages. Writing requires the author's own experience and perspective, but critique and verification are objective, standards-based processes, which is exactly what LLMs are good at.
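Because the reviewing roles are independent of one another, they can run concurrently and have their notes merged afterward, which is the shape of the editorial team described above. The Python sketch below shows only that fan-out-and-merge structure: `review` is a hypothetical stub standing in for a real LLM call (it just counts words), and the role names are taken from the editorial-team description.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Note:
    reviewer: str
    comment: str


ROLES = ("editor", "critic", "reader representative", "fact-checker")


async def review(role: str, draft: str) -> list[Note]:
    """Hypothetical stand-in for an LLM call made with a role-specific prompt."""
    await asyncio.sleep(0)  # placeholder for network latency
    return [Note(role, f"{role} read {len(draft.split())} words")]


async def editorial_team(draft: str) -> list[Note]:
    """Run every reviewer concurrently, then merge their notes into one report."""
    results = await asyncio.gather(*(review(role, draft) for role in ROLES))
    # gather returns results in argument order, so the report is grouped by role.
    return [note for notes in results for note in notes]


if __name__ == "__main__":
    for note in asyncio.run(editorial_team("A short draft about writing.")):
        print(f"[{note.reviewer}] {note.comment}")
```

Running the reviewers concurrently means the total wait is roughly that of the slowest reviewer rather than the sum of all four.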

You focus on sharpening the thing you most want to say. Everything else you hand off to AI.

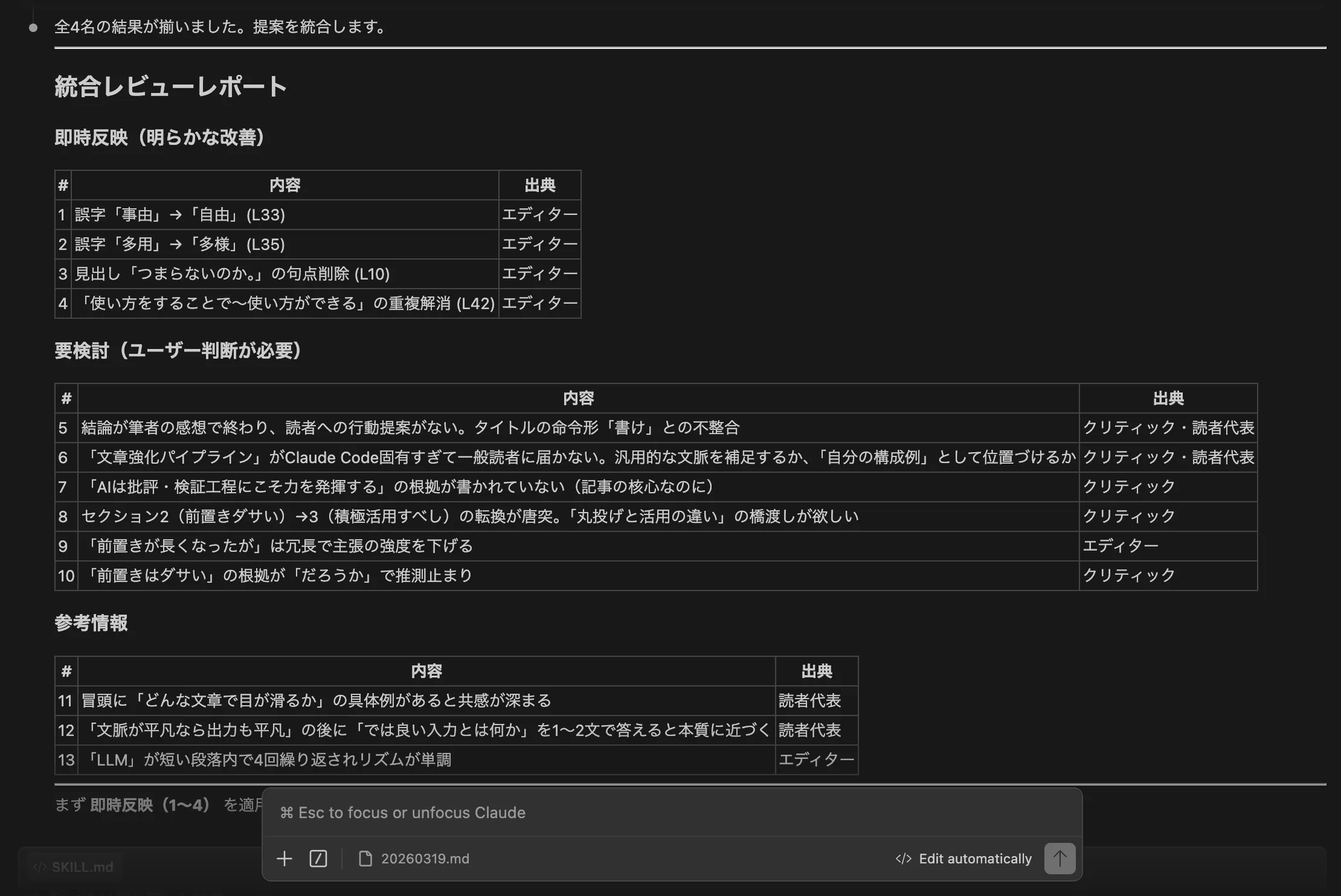

For example, during the review process for this article, the following kind of report came back, and I revised based on those notes:

Writing Is More Fun Because of AI

Now that AI can support the whole arc from first draft to quality review, writing has become more enjoyable and less daunting than before, and I've come to feel genuinely satisfied with what I produce.

If you want to try this, start with something you've already written and ask an LLM to "identify three logical weaknesses in this piece." That alone will change how you see your own writing.